OpenAI’s o4-mini-deep-research represents the convergence of two innovations: the compact yet capable o4-mini reasoning model and the agentic Deep Research framework. Launched in June 2025, this hybrid system delivers autonomous, high-fidelity research capabilities at a fraction of the cost and latency of its full-sized counterpart, o3-deep-research. By running the Deep Research agent on o4-mini’s streamlined architecture, developers and researchers can execute extended web browsing, data synthesis, and complex analysis workflows in minutes rather than days.

Features

- Lightweight Architecture: Uses the compact o4-mini model for reduced latency and inference cost.

- Integrated Web Search: Invokes search tools within its reasoning pipeline, yielding richer, up-to-date context.

- Python Interpreter Access: Supports on-the-fly code execution for mathematical computation, data processing, and interactive querying.

- Modular Agent Design: Pluggable tool interfaces allow seamless integration with custom retrieval or external APIs, enhancing flexibility.

Technical Details

o4-mini-deep-research builds on the transformer-based o4-mini model, fine-tuned under an agentic framework that orchestrates:

- Query Decomposition: Breaks down complex prompts into sub-tasks.

- Search-Augmented Reasoning: Embeds retrieval steps into its chain-of-thought, enabling real-time fact grounding.

- Self-Validation Loops: Implements self-check routines to reduce hallucination, though some inaccuracies persist.

- Interpreter Invocation: Dynamically spins up a sandboxed Python runtime for computations, raising its performance on benchmarks like AIME.
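To make the orchestration above concrete, here is a toy Python sketch of the decompose, search, and self-check stages. It is not OpenAI’s implementation: `decompose`, `research`, `Finding`, and the injected `search` callable are hypothetical, decomposition is a naive string split, and "self-validation" is reduced to a simple rule (retry with a reformulated query when a sub-question has no supporting source). The interpreter stage is omitted for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable

# Hypothetical search tool: takes a query, returns a list of source identifiers.
SearchFn = Callable[[str], list[str]]


@dataclass
class Finding:
    sub_question: str
    evidence: list[str] = field(default_factory=list)


def decompose(prompt: str) -> list[str]:
    """Toy query decomposition: split on '?' and ' and ' (a stand-in heuristic)."""
    parts = [p.strip(" ?") for p in prompt.replace("?", " and ").split(" and ")]
    return [p for p in parts if p]


def research(prompt: str, search: SearchFn, max_retries: int = 1) -> list[Finding]:
    """Search each sub-question; retry with a reformulated query if no evidence is found."""
    findings = []
    for sub in decompose(prompt):
        evidence = search(sub)  # search-augmented step
        retries = 0
        # Toy self-check: an answer with no supporting source is not accepted as-is.
        while not evidence and retries < max_retries:
            evidence = search(sub + " overview")  # hypothetical reformulation rule
            retries += 1
        findings.append(Finding(sub, evidence))
    return findings
```

Injecting `search` as a plain callable mirrors the pluggable-tool idea from the feature list: a real web search client, a custom retriever, or a test stub can be swapped in without touching the loop.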

Benchmark Performance

- AIME 2025: The base o4-mini model achieved 92.7% accuracy on the 2025 American Invitational Mathematics Examination, outperforming o3 on this math reasoning benchmark.

- GPQA Diamond: o4-mini scored 81.4% on these Ph.D.-level science questions, demonstrating robust performance in scientific domains.

- BrowseComp Agentic Browsing: Delivered 45.6% accuracy on this agentic browsing benchmark, compared with 51.5% for deep research mode, trading some depth for speed.

Model Versioning

OpenAI publishes date-stamped model identifiers to ensure reproducibility and version control:

- o4-mini-deep-research-2025-06-26

- Future updates will follow the `<model>-<YYYY-MM-DD>` convention, allowing developers to pin specific snapshots in production.
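A date-stamped identifier can be split back into its alias and snapshot date, which is handy for checking that production configuration actually pins a snapshot. This is a minimal sketch assuming identifiers end in `-YYYY-MM-DD` as described above; `parse_snapshot` and `is_pinned` are hypothetical helpers, not part of any SDK.

```python
import re
from datetime import date
from typing import Optional, Tuple

# Assumes the <model>-<YYYY-MM-DD> convention: an ISO date as the final suffix.
_SNAPSHOT_RE = re.compile(r"^(?P<alias>.+)-(?P<date>\d{4}-\d{2}-\d{2})$")


def parse_snapshot(model_id: str) -> Tuple[str, Optional[date]]:
    """Return (alias, snapshot_date); snapshot_date is None for an unpinned alias."""
    match = _SNAPSHOT_RE.match(model_id)
    if match is None:
        return model_id, None
    # date.fromisoformat raises ValueError for impossible dates such as 2025-02-30.
    return match.group("alias"), date.fromisoformat(match.group("date"))


def is_pinned(model_id: str) -> bool:
    """True when the identifier names a specific date-stamped snapshot."""
    return parse_snapshot(model_id)[1] is not None
```

For example, `parse_snapshot("o4-mini-deep-research-2025-06-26")` yields the alias `o4-mini-deep-research` and the date 2025-06-26, while the bare alias is reported as unpinned.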

Limitations

- Timeout Constraints: Queries that run longer than 600 seconds error out and their compute credits are refunded, which favors shorter, iterative research cycles.

- Depth vs. Speed Trade-off: While optimized for throughput, o4-mini-deep-research may produce less exhaustive syntheses on ultra-complex queries than its o3-deep-research counterpart.

- Reliance on Retrieval: Quality depends on upstream search results; missing or paywalled sources can impact completeness.

How to Access the o4-mini-deep-research API

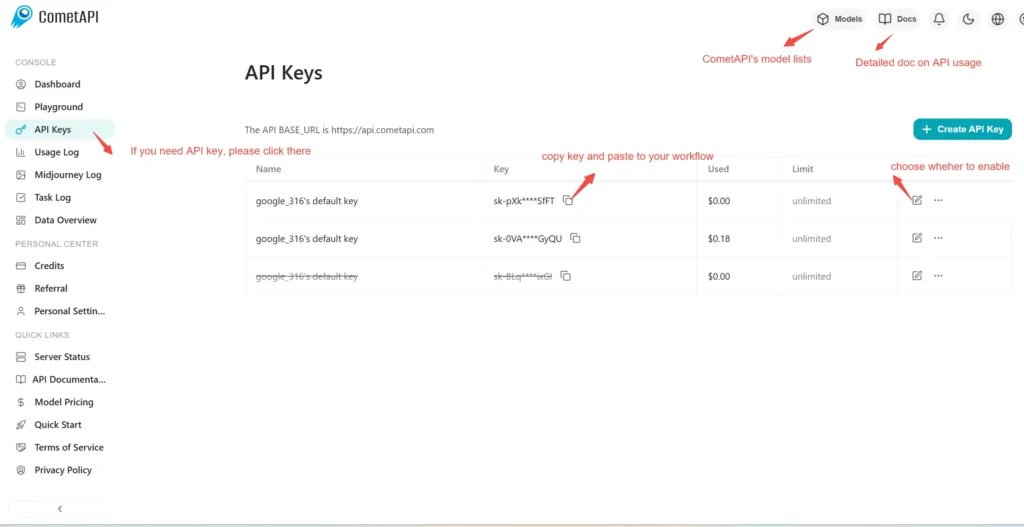

Step 1: Sign Up for API Key

Log in to cometapi.com; if you are not a user yet, register first. In your CometAPI console, go to the API token section of the personal center and click “Add Token” to create an access credential. Copy the token key (sk-xxxxx) and submit.
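Rather than pasting the token into source files, a common practice is to read it from the environment. A minimal sketch; the variable name `COMETAPI_KEY` and the `sk-` prefix check are assumptions based on the sample token above, and `load_api_key` is a hypothetical helper.

```python
import os


def load_api_key(env_var: str = "COMETAPI_KEY") -> str:
    """Read the CometAPI token from an environment variable (name is hypothetical)."""
    key = os.environ.get(env_var, "").strip()
    # Sanity check only: assumes tokens start with "sk-", as in the sample token.
    if not key.startswith("sk-"):
        raise RuntimeError(f"{env_var} is unset or does not look like an sk- token")
    return key
```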

Step 2: Send Requests to o4-mini-deep-research API

Select the “o4-mini-deep-research” endpoint, then set the request body. The request method and body format are described in the API documentation on our website, which also provides an Apifox test for your convenience. Replace <YOUR_API_KEY> with your actual CometAPI key from your account.

Insert your question or request into the content field; this is what the model will respond to.
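The sketch below builds such a request with only the Python standard library. The URL and body schema are assumptions for illustration: the endpoint path is hypothetical, and the body is modeled on OpenAI’s Responses API, where (per OpenAI’s documentation) deep research models need at least one data-source tool such as web search. Take the real method, URL, and schema from the CometAPI documentation.

```python
import json
import urllib.request

# Assumptions for this sketch: hypothetical endpoint and a Responses-style body.
API_URL = "https://api.cometapi.com/v1/responses"
MODEL = "o4-mini-deep-research"  # or a pinned snapshot, e.g. o4-mini-deep-research-2025-06-26


def build_request(question: str, api_key: str) -> urllib.request.Request:
    """Package the research question in the message 'content' field."""
    body = {
        "model": MODEL,
        "input": [{"role": "user", "content": question}],
        # Assumed data-source tool; deep research runs need at least one.
        "tools": [{"type": "web_search_preview"}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def send(req: urllib.request.Request, timeout_s: float = 600.0) -> dict:
    """POST the request and decode the JSON reply (performs real network I/O).

    timeout_s applies to blocking socket operations, not the total run time.
    """
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Keeping request construction separate from `send` makes the payload easy to inspect or test offline; call `send(build_request(question, key))` with your sk- key only when you are ready to spend credits.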

Step 3: Retrieve and Verify Results

After processing, the API responds with the task status and output data. Parse the response to extract the generated answer.
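The exact response schema is defined in the CometAPI documentation. Assuming it mirrors OpenAI’s Responses API (a top-level `status` plus an `output` list whose `message` items carry `output_text` parts with `url_citation` annotations), a sketch of extracting the report and the cited URLs you can check it against looks like this; `extract_report` is a hypothetical helper.

```python
from typing import List, Tuple


def extract_report(response: dict) -> Tuple[str, List[str]]:
    """Return (report_text, cited_urls) from a Responses-style payload (assumed schema)."""
    status = response.get("status")
    if status != "completed":
        raise RuntimeError(f"task not completed: status={status!r}")
    texts: List[str] = []
    urls: List[str] = []
    for item in response.get("output", []):
        # Skip tool-call and reasoning items; only assistant messages hold the report.
        if item.get("type") != "message":
            continue
        for part in item.get("content", []):
            if part.get("type") != "output_text":
                continue
            texts.append(part.get("text", ""))
            for ann in part.get("annotations", []):
                if ann.get("type") == "url_citation":
                    urls.append(ann.get("url"))
    return "\n".join(texts), urls
```

Returning the cited URLs alongside the text supports the verification step: spot-check key claims against their sources, since upstream search quality limits the report’s accuracy.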